OpenCL implementation od DES cipher is way faster than regular single-threaded C program. 1 mln encryptions take less than a second on RTX 3050 Ti, but also as much as almost 40 seconds on Intel i5-10200h single-thread application.

Lack of compact and extremely portable SSL/TLS library in pre C++11 project made me think about going for something easy to implement on my own concerning data encryption. I’ve selected DES, which stands for Data Encryption Standard because of my general understanding of such algorithm. It comes from 1975 and has certain limitations including short key length or known weak keys. But if we put our prejudices aside we may see few interesting things and opportunities as well.

It took me several days to accomplish C99 kernel in OpenCL. Before this I tried few third party examples in c++. One major drawback is that all of them use strings, vectors, stringstreams and few other strictly c++ features. Even use of printf is problematic in OpenCL implementations as you may not get it or it may be working differently from implementation to implementation. You will not be able to use some of c99 features like malloc/free. So to get maximum compatibility I went down to the simplest solutions.

I especially admire example in which you use binary as strings (uchar arrays). This way you can easily see what is going on. Of course (really?) it adds complexity and increases instructions count as well as memory consumption but for the first DES implementation it is acceptable. So you will see in various places arrays of 8 byte elements meaning 64 bits of data. Keys and other values as 56 or 48 bits of data and finally halves as 32 bits values (4 byte digits). Both input and output can be displayed as characters. Input will be plain ASCII, but output come over 128 decimal ASCII code so you can see some weird characters in case of printing them instead of presenting only numbers.

OpenCL vs single threaded C

In order to run kernel code outside OpenCL runtime you need to provide few things:

#include "stdio.h"

#include <math.h>

typedef unsigned char uchar;

You need to add math library in GCC invocation because by default it is not included:

gcc des.c -lm

Then, kernel main function need to be adjusted, for instance as:

void kernel() {

// here we have the kernel function body...

}

And finally provide C language main function with iterations already parametrized:

int main() {

int i;

for (i=0; i<1024*1024; i++) {

if (i % 1024 == 0) {

printf("i: %i ", i);

}

kernel();

}

}

For sake of simplicity I skip uchar8 definition and handling as it do not add than much to overall complexity of the code and the algorithm. Running on different hardware with 1024*1024 iterations. First going to compare CPU execution time:

| Hardware | Duration |

| Dell G15: Intel Core i5 10200H 2.4 GHz | 38s |

| MacBookPro3,1: Intel Core 2 Duo 2.2 GHz | 1min 37s |

| PowerBook G4: PowerPC G4 800 MHz | 11 min 22s |

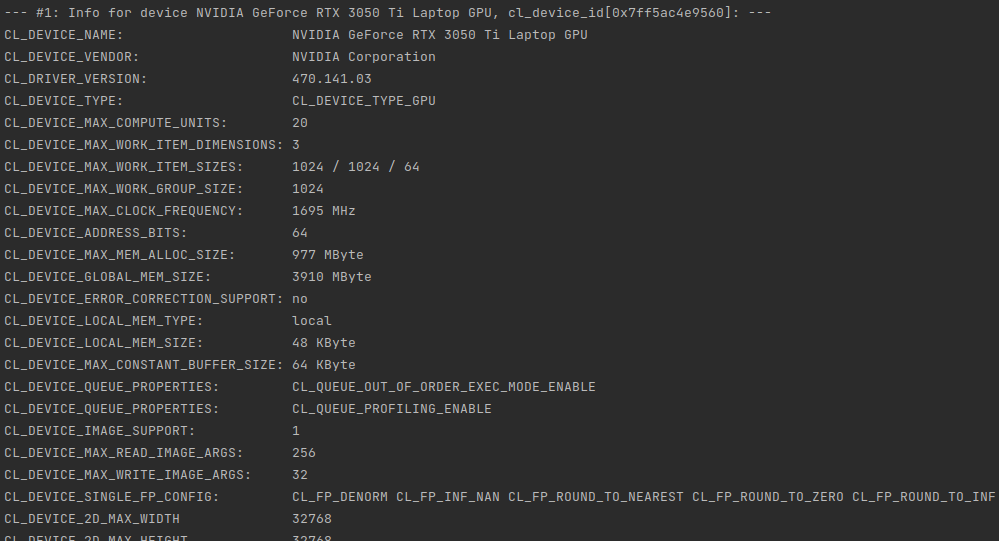

Now compare it with OpenCL runtime duration:

| Hardware | Cores No | Compute Units (acc. to OpenCL) | Duration |

| NVIDIA GeForce RTX 3050 Ti Mobile | 2560 | 20 | 930ms |

| Intel UHD 10th gen | 192 | 12 | 2065ms |

Java base project

I use OpenCL base project which can be found here. There is one additional module called “des”. Originally I used Java 17, but today I select Java 19. Frankly speaking I cannot point out easily anything that much important between Java 11 and 19. Each version introduces either small language changes or no changes at all. But if you code complex object-oriented applications then those changes might be interesting for you.

So… first I fill source data table with the following function:

public void generateSampleRandomData() {

Random rd = new Random();

for (int i = 0; i <= (n*8) - 1; i++) {

byte c = (byte)('a' + rd.nextInt(10));

srcArrayA[i] = c;

}

}

This function generates n*8 byte elements within a range of ASCII ‘a’ letter decimal representation and 10 numbers ahead. In other words random characters will be within range from ‘a’ do ‘j’ which in decimal will be from 97 to 106. One word about byte type in Java language – it is always signed so there is direct possibility to use it as unsigned. There is however Byte.toUnsignedInt function which translates negative byte numbers into positives.

Next thing is buffer. As later we will see that kernel function utilizes uchar8 data type, there is need to map such type in Java. I came with idea of using plain byte array (byte[]). Each and every kernel invocation will map consecutive groups of 8 elements from this plain array:

public void createBuffers() {

// Allocate the memory objects for the input- and output data

this.memObjects[0] = clCreateBuffer(this.context, CL_MEM_READ_ONLY | CL_MEM_COPY_HOST_PTR, (long) Sizeof.cl_uchar8 * this.n, KernelConfigurationSet.srcA, null);

this.memObjects[1] = clCreateBuffer(this.context, CL_MEM_READ_WRITE, (long) Sizeof.cl_uchar8 * this.n, null, null);

}

Both source and target arrays are type cl_uchar8 which translate into uchar8 in the kernel itself. To print results coming back from kernel invocations we will use aforemendtioned Byte.toUnsignedInt function:

public void printResults() {

System.out.println("Elements: " + dstArray.length);

int i = 0;

for (byte tmp : dstArray) {

System.out.println(String.valueOf(i) + " : " + (Byte.toUnsignedInt(tmp)) );

i++;

}

}

And that is basically all regarding Java part of this challange. Use of Java here is a matter of covenience as you may do it also using c or c++. I do not know by now about any discrepancies in JOCL library and some other libraries available.

OpenCL 1.1

In order to run kernel code on some older devices you need to adjust few things. First you need to get rid of printf function invocations or define it by yourself. Second things you need to enable floating point cl_khr_fp64 extension in case of using double type:

#pragma OPENCL EXTENSION cl_khr_fp64 : enable

There are options no to use pow function and convert the entire cipher algorithm to use bit selection. For my educational purposes however it is much easier to see what’s going on like that.

C99 kernel

I’ve divided the DES cipher algorithm into 27 functional points from FP00 to FP26. Some of them contains data only and other ones consists procedures.

General section

- FP00 – auxilliary functions (lines 1-133)

- FP01 – kernel definition

- FP02 – data and identification

- FP03 – key

- FP04 – plain text

- FP05 – PC1/PC2 tables data

Generating keys

- FP06 – PC1 key permutation

- FP07 – key division into two equal halves

- FP08 – 16 round keys, for 1, 2, 9 and 16 single left shifts

- FP09 – for other iteration double left shifts

- FP10 – combining left and right parts

- FP11 – PC2 key permutation

Encryption procedure

- FP12 – data only, initial permutation and expansion table data

- FP13 – data only, substitution boxes 8 boxes, 4 rows, 16 colums each

- FP14 – data only, permutation table

- FP15 – applying initial permutation

- FP16 – dividing result into two equal halves

- FP17 – encrypting 16 times, right laft is expanded

- FP18 – result XORed with key

- FP19 – apply 8 times substitution boxes

- FP20 – applying permutation

- FP21 – result XORed with left half

- FP22 – left and right part of plain text swapped

- FP23 – combining left and right part

- FP24 – applying inverse permutation

- FP25 – preparing final result

- FP26 – writing result to the output OpenCL buffer

Summary

In this challange… which source code can be found here I verified possibility to code DES cipher algorithm using OpenCL enabled devices. This 500 lines of code can be run either on OpenCL device or in slightly modified form on any other devices with can compile C language. OpenCL implementation runs 40 times faster on GPU than on single threaded CPU. This was kinda interesing one…